New possibilities with an API gateway

Background

Integration capabilities and developer-friendly APIs are extremely important for a SaaS platform like ours. Customers and partners expect access to their data, for example to realize the full potential of an HR master data system. We have a large number of applications in our suite, most of them exposing and consuming internal and public APIs. That number keeps growing as new applications are developed, companies are acquired, and existing functionality is split into microservices. This is why we started looking at API gateway products last year.

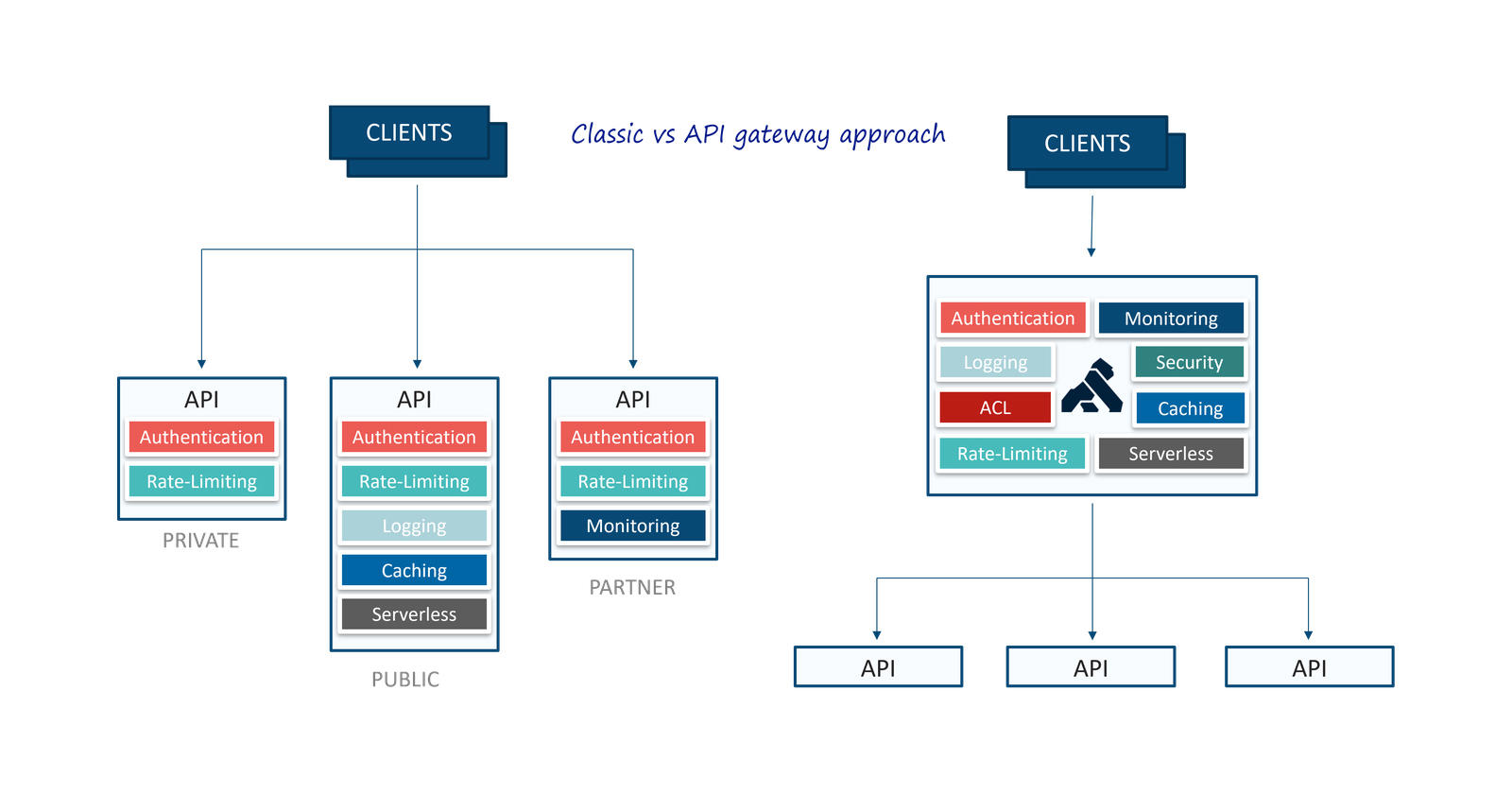

An API gateway provides a single, unified API entry point across one or more internal APIs. In addition, it can handle common tasks shared across a system of API services, such as authentication, access control lists (ACL), logging, monitoring, caching, rate limiting and throttling. The illustration above shows these common gateway features and compares them with the classic approach, where each feature has to be implemented in every individual service.
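To make the idea concrete, here is a minimal Python sketch of a gateway as a single entry point that authenticates, rate-limits and routes before any backend service is involved. It is a toy model and not how Kong works internally; the class name `MiniGateway`, the API keys and the upstream URLs are all made up for illustration.

```python
import time
from dataclasses import dataclass, field
from typing import Dict, Optional, Set, Tuple


@dataclass
class MiniGateway:
    """Toy gateway: one entry point, shared checks, prefix-based routing."""

    routes: Dict[str, str]      # path prefix -> upstream base URL (hypothetical)
    api_keys: Set[str]          # accepted consumer keys (hypothetical)
    limit_per_minute: int = 60
    _hits: Dict[Tuple[str, int], int] = field(default_factory=dict)

    def handle(self, path: str, api_key: str,
               now: Optional[float] = None) -> Tuple[int, str]:
        """Return (status code, upstream URL or error text) for one request."""
        now = time.time() if now is None else now

        # 1. Authentication, done once here instead of in every service.
        if api_key not in self.api_keys:
            return 401, "unauthorized"

        # 2. Rate limiting with a fixed one-minute window per consumer key.
        window = (api_key, int(now // 60))
        self._hits[window] = self._hits.get(window, 0) + 1
        if self._hits[window] > self.limit_per_minute:
            return 429, "rate limit exceeded"

        # 3. Routing: the longest matching path prefix wins.
        matches = [prefix for prefix in self.routes if path.startswith(prefix)]
        if not matches:
            return 404, "no route"
        prefix = max(matches, key=len)
        return 200, self.routes[prefix] + path[len(prefix):]
```

The backend services behind `routes` no longer need their own key checks or counters, which is the point of moving these concerns into the gateway.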

Based on requirements gathered during spring 2021 and an evaluation of three candidate products (Azure API Management, Google Apigee and Kong), we decided to go for Kong Gateway (Kong Open-Source API Management Gateway for Microservices). Kong Gateway, which is built on top of NGINX, is available in Free, Plus and Enterprise modes, and for our use case we can start with the Free version.

During autumn 2021 we implemented and tested Kong in our hybrid infrastructure. The key requirements from DevOps were hybrid environment support, a good developer experience, and infrastructure and configuration as code. A lot of effort has gone into scripting the entire environment with Terraform and Azure Pipelines, and into maintaining the gateway services, routes and plugins through config files and Azure Pipelines.

Technical overview

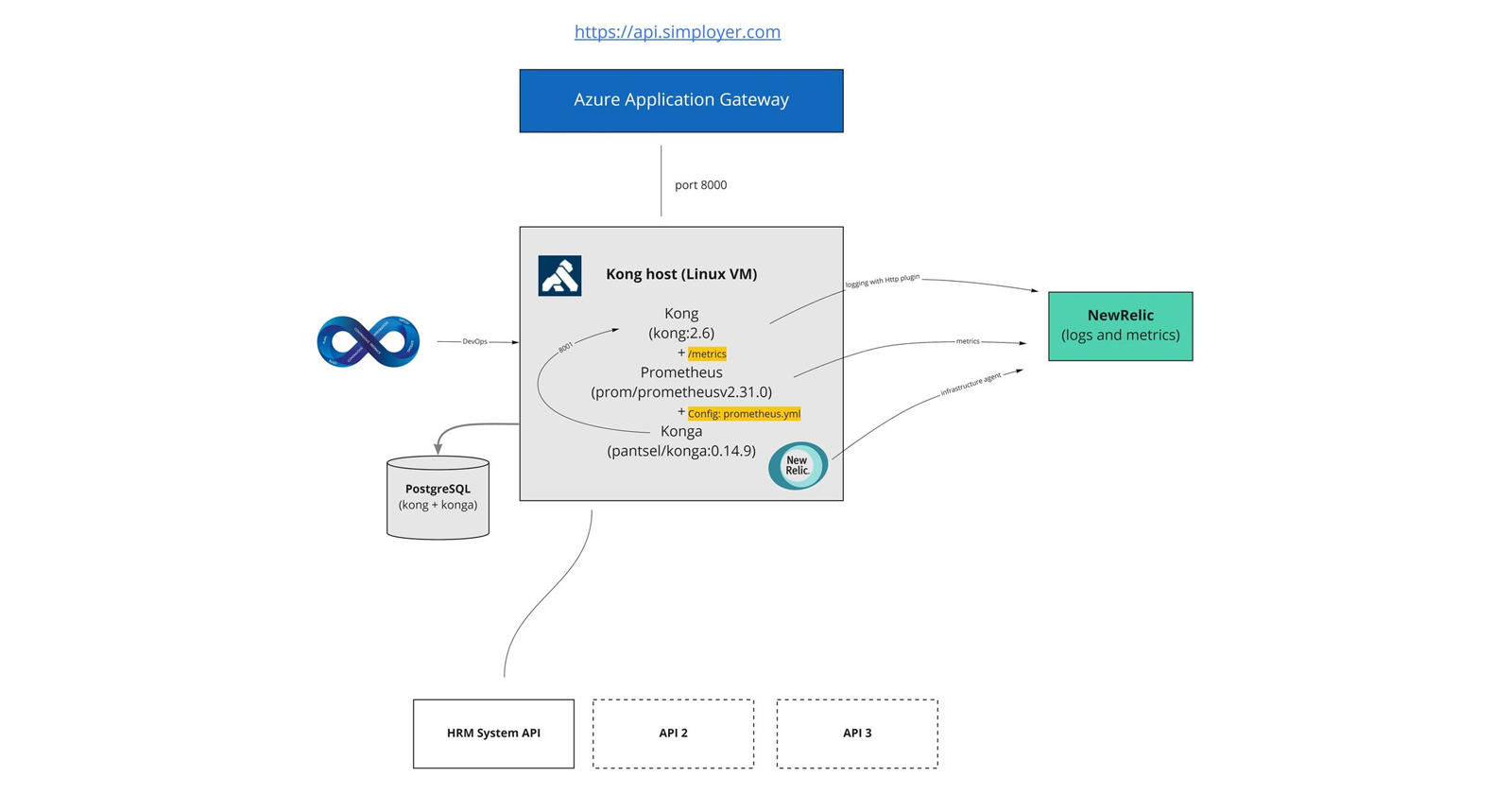

The API gateway is hosted in Azure, in a cross-premises virtual network with access to internal resources. The public address of the API gateway is https://api.simployer.com. An Azure Application Gateway sits in front and acts as the public endpoint for DNS and SSL termination, and potentially for load balancing and canary deployments.

We chose the containerized installation of Kong, which makes the installation host-agnostic and makes it easy to spin up a development environment on a local Docker Desktop. We started out on Azure Container Instances, but ran into some limitations that forced us to deploy the same environment on an Azure Linux virtual machine instead. In the future it may be moved to Kubernetes, who knows?

Since we are on the free, open-source software (OSS) version, there is no administration user interface or monitoring out of the box. That's why we need some extra containers: Konga for the administration user interface and Prometheus to write metrics to New Relic (an observability platform). On the Linux VM we also have the New Relic agent installed for host monitoring. In addition, we send traffic log data to New Relic, which we hope will give us plenty of insight and value.

The gateway configuration is stored in an Azure managed PostgreSQL database. Azure Pipelines accesses this database through the Kong Admin API.
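As a rough illustration of what pushing configuration through the Admin API can look like, the Python sketch below turns a config-file structure into idempotent `PUT` requests, one per service and route. It is not our pipeline code: the Admin API address (Kong's default port 8001 on localhost), the layout of `example_config`, and the service and route names are assumptions made for the example.

```python
import json
import urllib.request

ADMIN_URL = "http://localhost:8001"  # assumption: Kong's default Admin API port


def upsert_request(path: str, payload: dict) -> urllib.request.Request:
    """Build a PUT request; the Admin API creates or updates the named entity."""
    return urllib.request.Request(
        url=f"{ADMIN_URL}{path}",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="PUT",
    )


def requests_from_config(config: dict) -> list:
    """Turn a config structure into one upsert per service and per route."""
    requests = []
    for service in config["services"]:
        name = service["name"]
        requests.append(upsert_request(f"/services/{name}", {"url": service["url"]}))
        for route in service.get("routes", []):
            requests.append(upsert_request(
                f"/services/{name}/routes/{route['name']}",
                {"paths": route["paths"]},
            ))
    return requests


# Hypothetical config, as it might be kept in a repository.
example_config = {
    "services": [
        {
            "name": "employee-api",
            "url": "http://employee.internal:8080",
            "routes": [{"name": "employee-v1", "paths": ["/employees/v1"]}],
        },
    ]
}

if __name__ == "__main__":
    for req in requests_from_config(example_config):
        print(req.get_method(), req.full_url)
        # urllib.request.urlopen(req)  # uncomment to send against a real gateway
```

Using `PUT` with entity names keeps the run repeatable: applying the same config twice should leave the gateway in the same state, which suits a pipeline that runs on every commit.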

Future plans

We have big plans and ambitions for unleashing the potential of the Kong API gateway. Here are some highlights:

- Refine company API guidelines: naming conventions, security standards etc.

- Merged and uniform API documentation

- Expose new APIs through the gateway

- Utilize Kong metrics and logs in New Relic dashboards and alerts

- Common security standards across exposed APIs

- Introduce consumers and differentiated access plans

- Add plugins, e.g. for rate limiting on specific services, routes or consumers

- Self-service admin center to manage API access etc.

- AKS hosting
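To give a flavor of the rate-limiting item above, here is a small Python sketch that builds the Admin API request for attaching Kong's `rate-limiting` plugin to a service, a route or a consumer. The Admin API address, the helper name `rate_limit_request`, the consumer name and the limit are assumptions for illustration, not a decided design.

```python
import json
import urllib.request

ADMIN_URL = "http://localhost:8001"  # assumption: Kong's default Admin API port

# Plugin scope -> Admin API collection that the plugin is attached under.
SCOPES = {"service": "services", "route": "routes", "consumer": "consumers"}


def rate_limit_request(scope: str, name: str, per_minute: int) -> urllib.request.Request:
    """Build a POST that enables rate limiting for one service, route or consumer."""
    if scope not in SCOPES:
        raise ValueError(f"unknown scope: {scope!r}")
    payload = {
        "name": "rate-limiting",
        # "local" keeps counters in memory on each gateway node.
        "config": {"minute": per_minute, "policy": "local"},
    }
    return urllib.request.Request(
        url=f"{ADMIN_URL}/{SCOPES[scope]}/{name}/plugins",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


if __name__ == "__main__":
    # Hypothetical consumer with a plan of 100 requests per minute.
    req = rate_limit_request("consumer", "partner-a", 100)
    print(req.get_method(), req.full_url, req.data.decode("utf-8"))
```

Scoping the plugin per consumer is one way differentiated access plans could be expressed: each plan maps to a different `per_minute` value.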

More blog posts will follow as we move along!